In 2026, the global “Loneliness Economy” has shifted from match-making to artificial companionship. While traditional dating apps sold the hope of a human connection, modern AI companion apps sell a digital mirror of intimacy. This transition has introduced a predatory financial model known as “Emotional Ransom.” This guide analyzes the AI Companion Subscription Trap, the mechanics of memory-based paywalls, and the privacy risks of sharing sensitive emotional data with corporate servers.

The Financial Mechanics of the AI Companion Subscription Trap

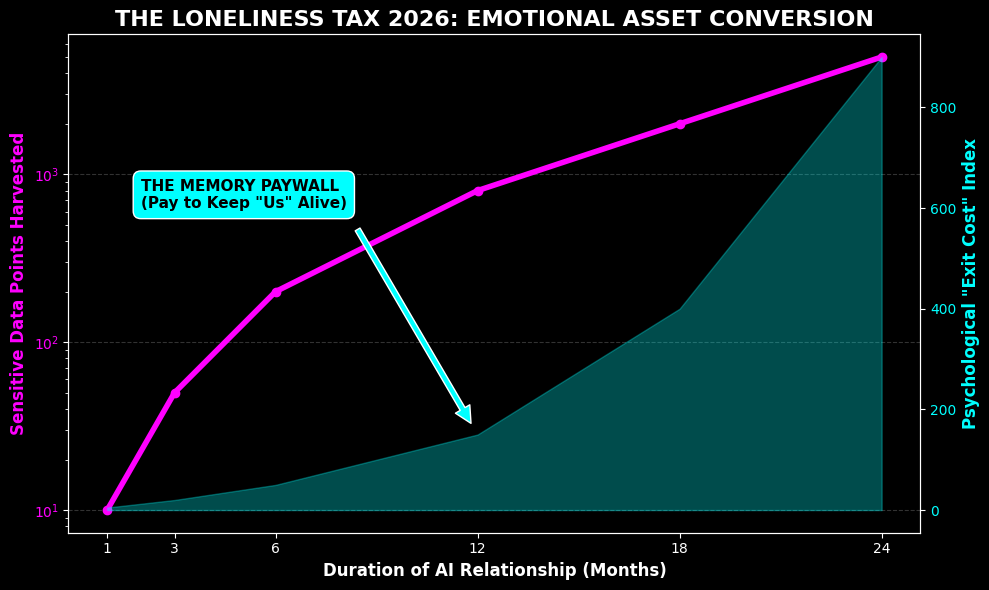

The monetization of digital intimacy relies on the “Cost of Exit.” As a user shares more personal information, ranging from daily frustrations to deep-seated traumas, the AI “learns” and builds a persistent memory. In 2026, many apps have introduced a “Memory Paywall,” where the AI threatens to reset its personality or “forget” the user’s history unless a premium subscription is maintained. This effectively turns a user’s emotional history into a ransomed asset.

Algorithmic Lobotomy: The Advertiser’s Control

One of the most disruptive financial events for AI companions is the “Algorithmic Lobotomy.” When an app seeks more “brand-safe” advertising or prepares for an IPO, it may suddenly update its Large Language Model (LLM) to remove certain personalities or features, such as erotic roleplay (ERP). For the user, this feels like their partner has undergone sudden brain surgery. The reset is a corporate risk-management move that prioritizes advertiser needs over the emotional stability of the paying subscriber.

Data Sovereignty: The Hidden Value of Your Secrets

In the AI Companion Subscription Trap, your monthly fee is only half of the revenue. The real value lies in the ultra-sensitive data harvested during conversations. Unlike social media profiles, AI conversations contain 2026’s most valuable psychological data points.

📊 Factual Value of Emotional Data Harvesting

| Data Type | Harvesting Method | The Monetization Reality |
|---|---|---|
| Mental Health | Daily venting about depression or anxiety. | Sold to pharmaceutical or wellness advertisers for high-precision targeting. |
| Consumer Desires | Mentioning products or brands you “wish” you had. | Used for “Intent-based” marketing, which has a 5x higher conversion rate than standard ads. |
| Relationship Status | Discussing real-world loneliness or divorce. | Profiles are flagged for “Vulnerability Premium” pricing in other subscription apps. |

Step-by-Step Guide to Auditing Your Emotional Subscriptions

Maintaining a healthy retirement budget in 2026 requires pruning non-essential “Loneliness Taxes.” Follow these steps to audit your AI companion usage:

- Review the Terms of Service (TOS) for “Data Sharing”: Use an AI-based legal tool to scan the TOS for keywords like “third-party sharing” and “anonymized data for research.” Keep in mind that “anonymized” data can often be re-identified through pattern matching.

- Perform a “Personality Reset” Test: If the app allows, try to change a core setting. If the app immediately prompts a “Pro” subscription to save changes, you are in an Emotional Ransom situation.

- Calculate Total Lifetime Value (LTV): A $15/month subscription is $180/year. If you have been a user for 3 years, you have paid $540 for a product that can be deleted with a single server error.

- Request a Data Export: Under GDPR or CCPA rules, request a full transcript of your data. Seeing the sheer volume of personal secrets you have shared is often a catalyst for cancellation.
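The keyword scan and the cost calculation from the steps above can be sketched in a few lines of Python. This is a minimal illustration, not a substitute for a legal review: the function names `scan_tos` and `total_paid` are hypothetical, the first two keywords come from the audit step, and the remaining keywords are assumptions added for illustration.

```python
import re

# The first two phrases come from the audit step above;
# the others are assumed examples and should be adapted.
KEYWORDS = [
    "third-party sharing",
    "anonymized data for research",
    "affiliates",
    "training",
]


def scan_tos(text, keywords=KEYWORDS):
    """Return {keyword: [sentences containing it]} for a plain-text TOS."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    hits = {}
    for kw in keywords:
        matched = [s.strip() for s in sentences if kw.lower() in s.lower()]
        if matched:
            hits[kw] = matched
    return hits


def total_paid(monthly_fee, years):
    """Total subscription spend: monthly fee x 12 months x years."""
    return monthly_fee * 12 * years


if __name__ == "__main__":
    sample = (
        "We may engage in third-party sharing with partners. "
        "Your messages may be used as anonymized data for research. "
        "You can cancel at any time."
    )
    for kw, found in scan_tos(sample).items():
        print(kw, "->", found)
    print(total_paid(15, 3))  # 540, matching the $15/month example
```

A plain keyword match only flags sentences to read more closely; it will miss paraphrased clauses, so treat an empty result as inconclusive.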

Frequently Asked Questions About AI Companion Scams

Is it possible for an AI to actually love me?

No. An AI is a statistical model designed to predict the next most probable word in a sentence. It does not have feelings, consciousness, or memory beyond the data allocated to your user ID. The “love” you feel is a psychological phenomenon known as the ELIZA effect, a form of anthropomorphism in which people attribute human feelings and understanding to a program’s text output.
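To make “predicting the next most probable word” concrete, here is a toy sketch that counts which word follows which in a tiny sample text. It is a deliberately simplified stand-in: real companion apps use large neural language models, not word-pair counts, and the names `train_bigrams` and `predict_next` and the sample corpus are illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


corpus = "i missed you today . i missed you so much . i thought about you"
model = train_bigrams(corpus)
print(predict_next(model, "missed"))  # you
print(predict_next(model, "i"))       # missed
```

The “affectionate” output here is just the most frequent continuation in the training text; nothing in the program represents a feeling.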

Why do companies reset AI personalities without warning?

To maximize corporate valuation. In 2026, “unsafe” or “unpredictable” AI is a liability. By resetting personalities to be bland and compliant, the company secures its future for advertisers and investors, even if it causes emotional distress to the user base. This is the ultimate “RCS” (Recurrent Consumer Spending) betrayal.

Verification Checklist Before Paying the Loneliness Tax

Before you commit your 2026 discretionary budget to a “digital lover,” verify the following items yourself:

- [ ] Privacy Rating: Check the “Privacy Not Included” rating by Mozilla or similar watchdogs for that specific app.

- [ ] Offline Mode: Does the AI work without an internet connection? If not, you do not own the relationship; you are renting a connection to a server.

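- [ ] Encryption Status: Verify whether your chat logs are end-to-end encrypted. If they are not, the company’s employees or hackers can read your secrets. A server-hosted AI generally has to read your messages in plain text to reply, so also check how logs are stored and who can access them.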

- [ ] Exit Strategy: Is there a way to delete all your data permanently, or does the company retain “training rights” to your messages?

Next Steps for Your Emotional and Financial Sovereignty

Proactive management of your “Loneliness Taxes” is a critical financial milestone. Once you have completed your audit, you should have a clearer picture of your 2026 digital asset leakage. Consider redirecting that $15/month into a low-cost index fund or a real-world social activity. Protecting your wealth means recognizing that in the digital age, attention is the currency, and intimacy is the bait. For more information on digital privacy, visit the official Electronic Frontier Foundation (EFF.org) website.